7. Forensic Evidence

FRED COHEN

I. Basics

Digital forensic evidence is identified, collected, transported, stored, analyzed, interpreted, reconstructed, presented, and destroyed through a set of processes. Challenges to this evidence come through challenges to the elements of this process. This process, like all other processes and the people and systems that carry them out, is imperfect. That means that there are certain types of faults that occur in these processes.

A. Faults and Failures

Faults consist of intentional or accidental making or missing of content, contextual information, the meaning of content, process elements, relationships, ordering, timing, location, corroborating content, consistencies, and inconsistencies.

Not all faults produce failures, but some do. Although it may be possible to challenge faults, this generally does not work and is unethical if there is no corresponding failure in the process.

Certain things turn faults into failures, and it is these failures that legitimately should be and can be challenged in legal matters. Failures consist of false positives and false negatives. _False negatives_ are items that should have been found and dealt with in the process but were not, whereas _false positives_ are things that should have been discarded or discredited in the process but were not.


[Figure 7.1: diagram with three groups. Process: identification, collection, transport, storage, analysis, interpretation, reconstruction, presentation, destruction. Faults: make/miss (content), accident/intent. Failures: false positives (False +), false negatives (False -).]

Figure 7.1 Possible faults in failures in the processes of interacting with digital evidence.

B. Legal Issues

In the United States, evidence in legal cases is admitted or not based on the relative weights of its probative and prejudicial value. _Probative value_ is the extent to which the evidence leads to deeper understanding of the issues in the case. _Prejudicial value_ is the extent to which it leads the finder of fact to believe one thing or another about the matter at hand. If the increased understanding from the evidence is greater than the increase in belief, the evidence is admissible.

Part of the issue of probative value is the quality of the evidence. If the process that created the evidence as presented is flawed, this reduces the probative value. Impure evidence, evidence presented by an expert who is shown to be unknowledgeable in the subject at hand, evidence that has not been retained in a proper chain of custody, evidence that fails to take into account the context, or evidence falling under any of the other fault categories described in Figure 7.1, all lead to reduced probative value. If the result of these faults produces wrong answers, the probative value goes to nearly zero in many cases.

C. The Latent Nature of Evidence

For digital evidence to be dealt with, it must be presented in court. Because digital data is not directly observable by the finder of fact, it must be presented through expert witnesses using tools to reveal its existence, content, and meaning to the fact finders. This puts it into the category of latent evidence. In addition, digital evidence is hearsay evidence in that it is presented by an expert who asserts facts or conclusions based on what the computer recorded, not what they themselves have directly observed. In order for hearsay evidence to be admitted, it normally has to come in under the normal business records exception to the hearsay evidence prohibition. Thus, it depends on the quality and unbiased opinion of the experts for each side.

D. Notions Underlying "Good Practice"

One of the results of diverse approaches to collection and analysis of digital forensic evidence is that it has become increasingly difficult to show why the process used in any particular case is reliable, trustworthy, and accurate. As a result, sets of “good practices” were developed by law enforcement in the United Kingdom, United States, and elsewhere. The use of the term _good practices_ is specifically designed to avoid the use of terms such as _standards_ or _best practices_; this is because of a desire to prevent challenges to evidence based on not following these practices.

The real situation is that there are no best practices or standards for what makes one approach to digital forensic evidence better or worse than another. In the end, what works is what counts. Because the law and the technology are not settled, many things may work in different situations, and to choose one over another would only muddy the waters.

Throughout this chapter I will comment on good practice, how and why deviations occur, and their implications. It is important in challenging evidence to seek out deviations from good practice, but it is also important to seek out reasons that these deviations are meaningful in terms of the basis of the challenge.

E. The Nature of Some Legal Systems and Refuting Challenges

In some legal systems, there are great rewards to those who challenge everything. The idea is to spread the seeds of doubt in the minds of the finders of fact. In presenting and characterizing evidence, care should be taken to not mischaracterize, overcharacterize, or undercharacterize the value and meaning of evidence.

There are valid and reasonable challenges to digital evidence, and those challenges must be addressed by those presenting it, but in many cases the challenges performed by court-recognized, but inadequately knowledgeable experts are just plain wrong. In my experience, such challenges are easily refuted and should be refuted.

Refuting clearly invalid challenges is often straightforward. In most such cases, ground truth can be clearly shown. As an example, when claims are made that the presence of a file indicates something unrelated to that file, a combination of manufacturers’ manuals and demonstrations readily destroys the credibility of the evidence and the person giving it. In one such case, a court-appointed special master made assertions that were clearly wrong. The combination of documentation and demonstration showed this expert for what he was and made a compelling case.

F. Overview

The rest of this chapter will focus on identifying sources of faults that occur in and among elements of the process and ways that those faults turn into failures. The failures are then used to challenge the process. Challenges can be couched in terms of the process, the fault, and the resulting failure, and this makes for an effective presentation of the challenge.

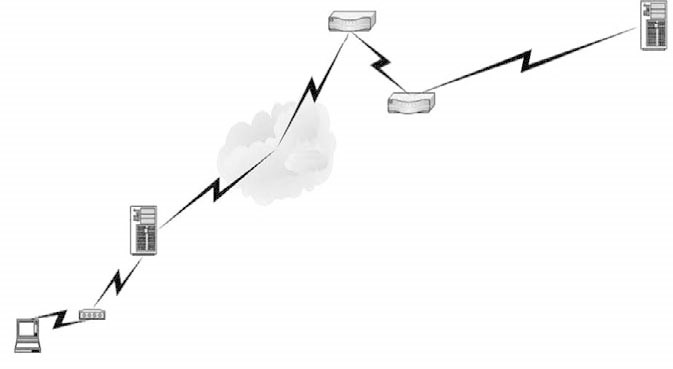

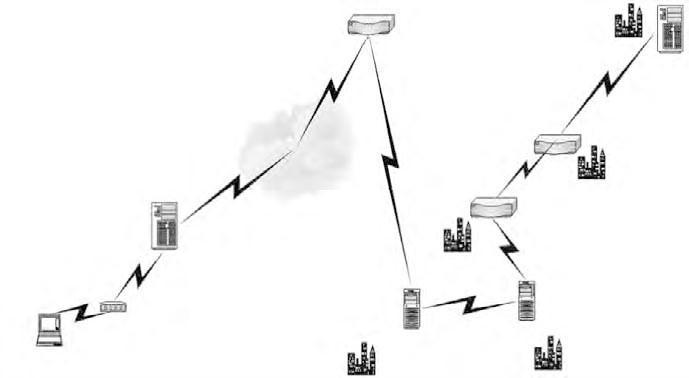

II. Identifying Evidence

The first step in gathering evidence is identifying possible sources of evidence for collection. It is fairly common that identified evidence includes too little or too much information. If too much is identified, then search and seizure limitations may be exceeded, whereas if too little is identified, then exculpatory or inculpatory evidence may be missed. The most common missed evidence comes in the form of network logs from related network components.

A. Common Misses

There is a great deal of corroborating evidence that can be sought from connected systems that produce log files, which can confirm or refute the use of a system by a suspect. If the evidence is not sought and the actions are in question, either in terms of taking place or in terms of their source, path, or content, the lack of intermediate audit trails may complicate the ability to definitively show what took place.

Other evidence that is commonly missed includes storage devices, networked computer contents, deleted file areas from disks, secondary storage, backups, and other similar information. Properly identifying information to be collected often fails because of missed relationships between computers and evidence in those computers. This evidence is oftentimes sensitive and is lost if not identified and gathered within a short time frame.

Relevant information is often located in places not immediately evident from the original crime scene. In cases where evidence is stored for long periods and can be identified as missing in a timely fashion, the fault can usually be mitigated by additional collection. The time frame for much of this information is very limited, particularly in the case of server logs, connection logs, and similar network-related information. The chain of custody issues for such evidence can also be quite complex and involve a large number of participants from multiple jurisdictions.

B. Information Not Sought

In some cases evidence is not sought. For example, when one side or another looks for evidence in a case, they may decide to follow up or not follow up on different facets of the case, pursue or not pursue various lines of enquiry, or limit the level of detail or sort of evidence they collect. These represent intentional nonidentification of evidence. On the other hand, there are plenty of good investigators who miss all sorts of evidence for one reason or another. Evidence is often concealed and not found by investigators. Sometimes it is stored somewhere the investigators are unaware of or cannot gain access to. Sometimes the evidence is destroyed or no longer exists by the time it becomes apparent that it might be of value. These sorts of faults occur in every case, but they rarely rise to the level of a failure causing a substantial error in a case. People do their best or focus their attention on what they think is important, and sometimes they miss things. Time and resources are limited, so certain lines are not always pursued. That’s just how the world is.

C. False Evidence

On the other hand, there are also cases, rare as they may be, when evidence is made up from whole cloth. Although this is increasingly difficult to do in all areas, such evidence in the digital arena is exceedingly rare. Indeed, it is very hard to make up digital evidence and have it survive expert challenges, and I am aware of no case when this has been done. There are cases when the defense makes such a claim, and there are even cases when digital evidence has been found to not be adequately tied to the party involved, but no cases I am aware of have been successfully challenged on the basis that the evidence was constructed. Every claim of construction of this sort that I am aware of to date has been successfully refuted.

D. Nonstored Transient Information

Any data that is not stored on permanent storage media cannot be seized; it can only be collected in real time by placing sensors in the environment. Such evidence must be identified in a different manner than evidence sitting on a desk or within a disk. This sort of evidence must be identified by an intelligence process, and special legal means must be applied in many cases to collect this evidence.

E. Good Practice

The general plan for good practice is to discover the computer(s) and/or other sources of content to be seized. To the extent that some source of evidence is not discovered, good practice is not followed. As a challenge, the sources of evidence not discovered may contain exculpatory content or other relevant material. It may seem obvious that anyone doing a search for digital evidence will try to find anything they can, but the technology of today leads to an enormous number of different devices that can be concealed in a wide variety of ways. Small digital cameras are commonly concealed in sprinkler systems, pictures, and similar places. A memory stick or SD drive can contain many megabytes of information and be the size of a fingernail or smaller. It is hard to find every piece of digital evidence, and harder still if it is intentionally concealed.

It is good practice to seize the main system box, monitor, keyboard, mouse, leads and cables, power supplies, connectors, modems, floppy disks, DATs, tapes, Jaz and Zip disks and drives, CDs, hard disks, manuals and software, papers, circuit boards, keys, printers, printouts, and printer paper. Also seize mobile phones, pagers, organizers, palm computers, land-line telephones, answering machines, audio tapes and recorders, digital cameras, PCMCIA cards, integrated circuits, credit cards, smart cards, facsimile machines, and dictating machines. All of these items may contain digital forensic evidence and may be useful in getting the system to operate again. A good rule of thumb is, “If in doubt, seize it.”

III. Evidence Collection

Most evidence is collected electronically. In other words, the process by which it is gathered is through the collection of electromagnetic emanations. In order to trust evidence, there needs to be some basis for the manner in which it was collected. For example, it would be important to establish that it comes from a particular system at which the user sits. This implies some sort of evidence of presence in front of the computer at a given time.

A. Establishing Presence

Records of activity are often used to establish presence. For example, users may have passwords that are used to authenticate their identity. These may be stored locally or remotely and will typically provide dates and times associated with the start of access, as well as with subsequent accesses. The verification process provides evidence of the presence of the individual at a time and place; however, such validations can be forged, stolen, and lent. In some environments common passwords and user IDs are used, making these identifications less reliable.

B. Chain of Custody

Digital forensic evidence comes in a wide range of forms from a wide range of sources. For example, in a recent terrorism case a computer asserted to be from a defendant was provided to the FBI by someone who purchased the computer at a swap meet. These are generally outdoor small vendor sales of used equipment of all sorts (from old guns to old electronic equipment) sold over folding tables and from the backs of cars. Some of it is stolen, some of it is resold by people who bought new versions, some is wholesale, some are damaged goods, and some is made by those who sell them. This computer was asserted to contain evidence, but establishing a chain of custody was a very difficult proposition, especially considering that the defendant claimed to never have had such a computer.

C. How the Evidence Was Created

The information that becomes evidence may be generated for various purposes, most of which are not for the purpose of presentation in court. Although the business records exception to the hearsay rule applies to normal business records, many other sorts of records may not be allowed in, depending on how they are created, collected, maintained, and presented and by whom. In most cases when information is gathered from systems as they operate, the systems under scrutiny are altered during the gathering process. Although this does not necessarily taint the evidence, it provides an opportunity for tainting that should not be overlooked if there is a reason to believe that tainting may have taken place.

D. Typical Audit Trails

Typical audit trails include the date and time of creation, last use, and/or modification as well as identification information such as program names, function performed, user names, owners, groups, IP addresses, port numbers, protocol types, portions or all of the content, and protection settings. If this sort of information exists, it should be consistent to a reasonable extent across different elements of the system under scrutiny.

E. Consistency of Evidence

For example, if a program is asserted to generate a file that was not otherwise altered, then the program must have been running at the time the file was created, must have had the necessary permissions to create the file, must have the capacity to create such a file in such a format, and must have been invoked by a user or the system using another program capable of invoking it. There is a lot of information that should all link together cleanly, and if it doesn’t, there are reasons to question it.

This is not to say that all of these records always exist in the proper order on all systems. For various reasons, some records get lost, others end up out of order, and times fluctuate to some extent; however, these are all within some reasonable tolerance, and substantial deviations are often detectable. Such deviations are indicators that things are not what they seem, and in such cases alternative explanations are available and should be pursued.
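The cross-checking described here can be illustrated with a minimal sketch. It assumes a hypothetical record layout (`AuditRecord`), hypothetical event names, and an arbitrary five-second tolerance; none of these come from a particular system, and a real examination would draw on many more record types.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class AuditRecord:
    """One audit-trail entry; the field names are illustrative assumptions."""
    source: str          # where the record came from, e.g. "process log"
    event: str           # what happened, e.g. "program started"
    timestamp: datetime  # when the source says it happened


def find_ordering_violations(records, expected_order, tolerance=timedelta(seconds=5)):
    """Return (earlier, later, gap) tuples that contradict the expected order.

    expected_order lists event names in the order they must have occurred.
    A later event may precede an earlier one by at most `tolerance`, which
    allows for ordinary clock fluctuation between record sources.
    """
    by_event = {r.event: r for r in records}
    violations = []
    for earlier, later in zip(expected_order, expected_order[1:]):
        if earlier not in by_event or later not in by_event:
            continue  # a missing record is not by itself a contradiction
        gap = by_event[later].timestamp - by_event[earlier].timestamp
        if gap < -tolerance:
            violations.append((earlier, later, gap))
    return violations


# Hypothetical records: the file claims to predate the program that made it.
records = [
    AuditRecord("process log", "program started", datetime(2024, 1, 1, 12, 0, 10)),
    AuditRecord("file system", "file created", datetime(2024, 1, 1, 11, 58, 0)),
]
violations = find_ordering_violations(records, ["program started", "file created"])
```

A non-empty result is only an indicator that an alternative explanation should be pursued; lost records and small clock differences are deliberately not flagged.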

F. Proper Handling during Collection

In most police-driven investigations normal evidence-handling requirements are used for digital forensic evidence, with a few enhancements and exceptions. Photographs and labels are commonly used, and an inventory sheet is typically made of all seized evidence. Suspects and others at the location under investigation are interviewed, passwords and similar information are retrieved, and in some cases this is used on-site to gain access to computer systems. If proper procedures are not followed, then the evidence arising from this process may be invalidated. For example, if a suspect is arrested and not Mirandized and asked for a password to a computer system, then all of the evidence from that system may become unavailable for prosecution if the password is used to gain access.

G. Selective Collection and Presentation

In some cases, prosecution teams have opted to not do a thorough job of collecting or presenting evidence. They prefer to seek out anything that makes the defendant look guilty and stop as soon as they reach a threshold required to bring the case to court. Many prosecution teams try to prevent the defense from getting the evidence, provide only paper copies of digital evidence, and so forth. In such cases the defense should vigorously challenge the courts to require that the prosecution present all of the evidence gathered in the same form as it was made available to them and for a similar amount of time. On the other hand, most defense teams fail to present evidence that would tend to convict their clients, and they certainly don’t try to help the prosecution find more evidence against their clients.

A good example of such an attempt involved the prosecution providing a printout listing the files on a disk. The printout was hundreds of pages long, contained no useful information, and could not be processed automatically. The defense in this case brought forth arguments that this was unfair and that the evidence should not be admitted at all unless the defense had adequate access to it. The issue of best evidence was also brought up. A paper copy of an extract from original electronic media is not best evidence and should not be allowed to be used when the original and more accurate copies are available and can be provided. This discussion is not intended to indicate that such behavior is limited to prosecution teams. Defense teams also do everything they can to limit discovery and make it as ineffective as possible for the other side. But because the prosecution is the predominant gatherer of digital forensic evidence in most criminal cases, it ends up being the prosecution that conceals and the defense that tries to reveal.

H. Forensic Imaging

In order to address decay and corruption of original evidence, common practice is to image the contents of digital evidence and work with the image instead of the original. Imaging must be done in such a way as to accurately reflect the original content, and there are now studies done by the United States National Institute of Standards and Technology (NIST) to understand the limitations of imaging hardware and software, as well as standards for forensic imaging. If these standards are not met, there may be a challenge to the evidence; however, such challenges can often be defeated if proper experts are properly applied.

In at least one case, a disk dump (dd) image of a disk was thrown out because some versions of the dd program operating on some disks fail to capture the last sector of the disk. This is rare, and in the particular case no finding was made to indicate that this had happened, yet the people who were trying to get the evidence excluded won, presumably because of the incompetence of the side trying to get the evidence in. Here are just some of the counterarguments.

The original evidence disk is normally seized and retained. If it is still there, it can be reimaged and the full content examined. The image with dd is only inaccurate on disks of certain sizes, and because the disk in this case has not been shown to be such a disk, the image can be shown to be accurate. The image taken with dd is accurate except for that last sector, so all of the evidence provided using it is still accurate. If the other side wants to assert that there is some evidence in the last sector that makes a difference, they can feel free to, but nothing there invalidates the evidence that does exist.

In many cases it can be demonstrated that the last sector of a disk does not have any relevant evidence because many operating systems use it for redundant copies of other data, in which case the contents can be accurately reconstructed. It turns out that every current imaging product has been shown to have similar flaws under some circumstances. None of them are more accurate than dd according to NIST, so unless all such evidence is to be ignored, this evidence must be allowed. But in this case it seems clear that justice was not served, and the process failed.

Proper technique in forensic imaging starts with a clean slate for the results of the image. Typically, to assure that no evidence is left over from previous content of the media, the media is first cleared of data through a forensically sound erasure process. This is often not done. After clearing of the information, it is common to put a known pattern that is unlikely to appear in normal evidence on the media to later detect failures to properly image the media. After verifying this content is correct, the image is then taken. The original media is cryptographically checksummed, either in parts or as a whole, the image is made, then the result is verified with the cryptographic checksums. The result can be tested for the presence of the identifiable cleared content, and the start and end of the evidence can be clearly verified by these patterns.
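The sequence of steps in this paragraph can be sketched as follows. This is an illustration under stated assumptions, not a forensically validated tool: ordinary files stand in for the evidence and target media, SHA-256 is an assumed choice of cryptographic checksum, and the fill pattern and function names are hypothetical.

```python
import hashlib

CHUNK = 1024 * 1024                 # process data in 1 MiB pieces
FILL_PATTERN = b"\xde\xad\xbe\xef"  # assumed marker, unlikely in normal evidence


def sha256_of(path):
    """Return the SHA-256 hex digest of the file or device at `path`."""
    digest = hashlib.sha256()
    with open(path, "rb") as f:
        for chunk in iter(lambda: f.read(CHUNK), b""):
            digest.update(chunk)
    return digest.hexdigest()


def prefill(path, size):
    """Overwrite the target with the known pattern before imaging."""
    block = FILL_PATTERN * (CHUNK // len(FILL_PATTERN))
    with open(path, "wb") as f:
        remaining = size
        while remaining > 0:
            piece = block[:remaining]
            f.write(piece)
            remaining -= len(piece)


def image_and_verify(source, target):
    """Checksum the source, copy it onto the target, and re-checksum the copy.

    Returns (source_digest, copied_length); raises ValueError on a mismatch.
    """
    source_digest = sha256_of(source)
    copied = 0
    check = hashlib.sha256()
    with open(source, "rb") as src, open(target, "r+b") as dst:
        for chunk in iter(lambda: src.read(CHUNK), b""):
            dst.write(chunk)
            copied += len(chunk)
        dst.flush()
        dst.seek(0)
        remaining = copied
        while remaining > 0:
            chunk = dst.read(min(CHUNK, remaining))
            if not chunk:
                break
            check.update(chunk)
            remaining -= len(chunk)
    if check.hexdigest() != source_digest:
        raise ValueError("image does not match the source checksum")
    return source_digest, copied


def tail_is_pattern(target, copied):
    """Check that everything after the image is still the fill pattern."""
    with open(target, "rb") as f:
        f.seek(copied)
        tail = f.read()
    offset = copied % len(FILL_PATTERN)
    repeats = len(tail) // len(FILL_PATTERN) + 2
    expected = (FILL_PATTERN * repeats)[offset:offset + len(tail)]
    return tail == expected
```

In this sketch the pattern surviving after the end of the image marks where the evidence stops, which is the role the known pattern plays in the procedure above. Erasing the target beforehand and write-protecting the source are outside what the sketch covers.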

Although failure to do any of these steps does not invalidate the image, it does raise the question of potential contamination. Similarly, cryptographic checksums can be questioned as can the validity of the mechanisms for extracting and storing data on the media, but these challenges are rarely likely to succeed against a competent forensic imaging expert because the processes are so effective and hard to refute. Perhaps the most promising area for technical challenges in cases where proper technique was used lies in the potential for disk content as reflected at the normal interface to fail to reflect accurately the content of the physical media. This is because of the electronics that mediates between the interface and the media. Such challenges have never been made and would require a very high degree of expertise and great expense; however, it is a potential that has not yet been explored.

I. Nonstored Transient Information

The collection of nonstored transient evidence typically involves a technical collection mechanism and often requires minimization in a law enforcement context. In the case of analog telephony, tape recorders and special electronics are used; whereas, in the case of digital traffic, the typical tool is a packet sniffer. Most packet sniffers have limitations in the form of collection rates, storage capacity, and ability to capture all packets. These limitations may be the basis for challenges involving missed information. Created data is far more difficult to deal with in a packet-sniffing technology. These technologies typically record what is sent through the media, but attribution to a source is more problematic.

Typical Ethernet interfaces use MAC addresses associated with packets to identify the hardware device associated with a transmission. Although these are manufacturer-specified serial numbers that are unique to a physical device, they can also be forged with special software. If the environment was not examined for the presence of such software and if other hardware is present, it is a reasonable challenge to assert that the data may not have come from the identified computer. In the absence of corroborating evidence, tying traffic to a computer is not directly evident.
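One narrow check that bears on this point can be sketched briefly. In the standard MAC address format, the bit with value 0x02 in the first octet marks an address as locally administered, meaning it was set in software instead of assigned by a manufacturer. The helper names below are hypothetical, and the check is only a hint: a forger can just as easily copy a manufacturer-assigned address, in which case the bit is clear.

```python
def parse_mac(text):
    """Convert 'aa:bb:cc:dd:ee:ff' (or '-' separated) into six integer octets."""
    parts = text.replace("-", ":").split(":")
    if len(parts) != 6:
        raise ValueError("expected six octets in MAC address: %r" % text)
    return [int(p, 16) for p in parts]


def is_locally_administered(text):
    """True if the locally administered bit (0x02 of the first octet) is set."""
    return bool(parse_mac(text)[0] & 0x02)
```

For example, `is_locally_administered("02:00:00:00:00:01")` is true, while an address whose first octet is `00` reports false. Neither answer ties traffic to a computer without corroborating evidence.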

In order to assert that such data is legitimate as evidence, there is a requirement that the manner in which it was gathered be demonstrated to be reliable. In cases involving communications media, there may also be requirements to follow wiretap laws as opposed to other laws, but other examples such as radar and infrared imaging, tape recording, and so forth may all involve digital forensic evidence.

This sort of evidence is also stored by the collection mechanisms in media with specific formats and characteristics and can be altered. Again, the evidence has many characteristics that allow it to be examined by experts to determine whether any obvious alteration has taken place. Many examples of this sort of content now exist because of the ready availability of computers with image and sound manipulation programs. In some cases people alter voices by combining recordings or reordering portions of them; pictures can be easily merged or altered to create false backgrounds and contexts; so-called morphing can be used to make characteristics seem similar; and digital artists can be quite skilled at creating digital renderings. Tools exist for creating shadows and similar realistic patterns and are relatively easy to use and inexpensive.

J. Secret Science and Countermeasures

This is another similar line of pursuit that has been used to prevent criminal defense teams from gaining access to key evidence and methods of gathering and analyzing evidence. In essence, the prosecution says that they have an expert who used a secret technique to determine that the defendant typed this or that. The defense asks for access to the means and detailed evidence so that they can try to refute the evidence, and the prosecution claims that this information is a government secret, classified at a level so that the defense team cannot see it. In addition, because of the way classifications work and because of a concerted effort by those who represent the government, anyone who works for a defendant is prevented from access to many of the people and techniques used by prosecution teams. Secret science presented by secret scientists is presented as objective fact that cannot be challenged. Even worse, some trial judges have let such evidence in.

There are some methods for countering such abuses, and they should be pursued with great vigor. One method is to have a digital forensic evidence expert on your team who has security clearances. This may be hard to do because very few such experts exist; however, there are some available. With such a person available, the secrecy argument can largely be eliminated from the process of examining the evidence, but the problem remains of how to try the case. Is the defense expert going to say that the prosecution expert is wrong and provide no details? This would seem ridiculous, and yet it may be the only alternative. Another alternative is to have this part of the case tried before the judge with a resulting stipulation. Yet another alternative is to ask revealing questions that don’t violate the secrecy requirements while still getting at the fundamental issues in the case.

For example, a lawyer might go through each of the known technologies for gathering and analyzing the evidence and ask whether each of them was used. Even if there is no response, each can be pursued for its potential flaws with questions that the expert may or may not be able to answer. The opposing expert can then address the flaws associated with the technologies and indicate whether any of them may have been present in this matter without revealing which ones are relevant and thus without revealing the secrets. The best course would be to have the evidence thrown out for any of the following reasons: (1) it is not best evidence; (2) it is hearsay; (3) it is highly prejudicial; (4) it was not made available to the defense, thus preventing the defendant from a fair trial; (5) its scientific validity has not been established; (6) the expert has not been shown to be an expert in the particular type of evidence under scrutiny; and (7) the defense has been denied the right to challenge the scientific evidence. If the case can be made that it is more prejudicial than probative, the case stands a chance, if not in court, then on appeal.

IV. Seizure Errors

The evidence seizure process has the potential of producing a wide range of errors that may lead to challenges. Search and seizure laws may mandate Title 3 searches for live capture of digital data passing through telecommunications channels; permission for searches may be removed at any time during a permission search, and continued searching at that time may violate rules of evidence; search warrants must be adequately specific to avoid becoming fishing expeditions; and the searches must be limited to meet the requirements of the warrant if a warrant exists. Hot pursuit laws rarely apply to digital evidence, but the laws regarding plain sight are far more complex. There have been cases that have gone both ways in searches of digital media for the purpose of seizure.

A. Warrant Scope Excess

In one case a warrant for a search for pornographic images was found to be exceeded when the officer making the search looked in directories with names that were indicative of other legitimate use. Of course, this is patently ridiculous because plenty of criminals have been found to store pornographic images under false names, in hidden files, in directories that hold legitimate images, and so forth, but the challenge worked because the judge was convinced. This is the combined result of a poorly written search warrant and a poorly educated judiciary, and this case was one of the earliest ones in this area, so such errors are likely on all sides.

There are legitimate cases on both sides, not all judges will rule the same way on the same information, and not all experts do the same things on the same cases. This sort of variation is in the nature of the work and is to be expected. Challenges should be undertaken when the evidence search and seizure process used in a nonpermission search fails to meet the reasonable requirements, when search warrant bounds are exceeded, when minimization is not adequately applied, and whenever evidence is found in a search that does not meet the original warrant and the search is not immediately stopped pending a new search warrant for the new sorts of information. In permission searches there is normally a scope of permission, and, unless it is unlimited, it may have the same problems as a warrant search in terms of admissibility.

B. Acting for Law Enforcement

Similar limitations exist for situations in which a non–law enforcement person is acting on behalf of law enforcement or the government. In most cases when a private individual undertakes a search of their own volition and reports results to law enforcement, there is no problem associated with illegal search and seizure, although the purity of the evidence may of course come into question. But in cases where there was a preexisting relationship with law enforcement, when the specific case was under discussion between law enforcement and the person doing the search, or when the search was ordered by someone who was in touch with law enforcement on the matter, there may be an issue of admissibility under this provision.

C.Wiretap Limitations and Title 3

In some cases where a wiretap or network tap is used, there may also be issues associated with the legality of such a wiretap. There are many states in which all parties to a communication must give permission for a recording. Without such permission, the recording may be inadmissible, and the person doing the recording may be legally liable for a criminal act. The laws on real-time collection are not very clear, and inadequate case law exists at this time; however, this makes an ideal situation for attempting to challenge evidence. The expertise of the person gathering the evidence is important to examine. In addition, if minimization is done, then an argument can sometimes be made that the exculpatory evidence was excluded in the gathering phase. This depends heavily on the specifics of the situation.

D.Detecting Alteration

Detecting alteration is very similar to the field of questioned documents, except that in this case the documents are digital rather than analog. There is a lot of tradecraft involved in trying to figure out whether there are forgeries and what is real and fake in such digital images of real-world events. For example, the locations of light sources and their makeup generate complex patterns of shadows that can often be traced to specifics. Specific imaging technologies leave specific headers and other indicators in the image files that result from their use. Aliasing properties of digitizers, start and stop transients, scratches on lenses, frequency characteristics of pickups, and similar information often make forgeries and digital alterations readily detectable by sufficiently skilled experts. As in other forms of digital forensic evidence, the cases where this sort of examination is relevant to the matter at hand are rare, but there are times when such analysis yields a valid challenge.

This particular sort of challenge has a tendency to appear more often in civil suits than in criminal cases because it is rare to find an instance where a member of the prosecution team creates such a forgery, and defense teams haven’t the desire, time, or money to create such a forgery.

In civil matters, however, these sorts of situations are far more common. For example there are many cases in which a celebrity’s head is placed on a naked body for the purposes of increased sales of pornographic material, and these forgeries tend to be readily detectable.

Another interesting example was the case of a digital image purported to be one of the airplanes photographed from the top of one of the World Trade Center towers as it was about to hit the building. This was asserted to be evidence that the Mossad (the Israeli secret service) was a co-conspirator in the September 11, 2001, attacks. The forgery was rapidly detected by an examiner who found many errors in the rendering, including wrong light direction for the time of day, improper scaling for the angle and distance, shadowing errors, edge line aliasing errors, and so forth.

E.Collection Limits

Because all collection methods are physical, there are inherent physical limits in the collection of digital evidence. The digitization process further introduces sources of low-level errors because of the rounding effects associated with clocks relative to time bases and voltages or currents with respect to bit values. A challenge to evidence collected from signals approaching these limits is typically based on the inability of the mechanism used to gather the evidence to accurately represent and collect the underlying reality it is intended to reflect. Error-correction mechanisms often imply changes to underlying physical data to regain consistency, and they produce a probabilistic and increasing chance of error as the physical signals approach these limits.

F.Good Practice

It is good practice to secure the scene and move people away from computers and power. This is a basic safety issue and assures that the people, investigators, and equipment are protected. Failure to do so is not likely to produce any false evidence, but it may result in the loss of otherwise valid evidence.

The investigator should not turn on the computer if it is off, not touch the keyboard if the computer is on, and not take advice from its owner or user. Clearly, a computer that is not turned on should not be turned on because this is very likely to produce alterations to the computer that may destroy its evidentiary value. Not touching the keyboard is somewhat more problematic. For example, many systems use a screen saver to lock out users after inactivity, but in the vast majority of cases, it is better to leave the keyboard and mouse alone. In terms of taking advice from the owner, more care may be necessary. Many systems are interconnected via the Internet, and if the user asserts some potential for harm associated with actions and that harm takes place, liability may be accrued. Still, such information should be passed through an experienced investigator and not taken out of hand. This is a place where good judgment may be important, and, of course, judgments are always within the realm of being challenged.

The screen should be photographed or its content noted, the printer or similar output processes should be allowed to finish, and the equipment should be powered off by pulling out all plugs. Taking a photograph or making notes of what is on the screen is certainly a reasonable step and cannot reasonably be expected to destroy or create evidence. Indeed it is likely to assure purity and consistency. Allowing output to finish may leave time for other undesirable alterations to the system. However, it may also provide additional evidence. If the system is networked, this becomes a more complex issue. For example, a remote user might alter the system after finding out that the normal user is not there, or even observe what is happening via an electronic video link. Powering off systems may also create problems, particularly if these systems act as part of the infrastructure of an enterprise. For example, this could cause all Internet access to many domains to fail or cause loss of substantial amounts of data. In some data centers with large numbers of computers, this is simply infeasible or so destructive as to be imprudent.

The investigator should label and photograph or videotape all components; remove and label all connection cables; remove all equipment, label, and record details; and note serial numbers and other identifying information associated with each component. The area should be searched for diaries, notebooks, papers, and for passwords or other similar notes. The user should be asked for any passwords, and these should be recorded. This process is clearly prudent and, to the extent that something is not photographed or labeled, it may lead to challenges. Wrong serial numbers, missing serial numbers, and similar errors may destroy the chain of evidence or lead to challenges about what was really present. At a minimum, these sorts of misses create unnecessary problems later in the case.

G.Fault Type Review

Faults in collection are most commonly misses of content, process failures or inaccuracies, missed opportunities caused by inadequate collection technology or skill, missed relationships, missed timing information, missed location information, missed locations containing information, missed corroborating content, and missed consistencies.

V.Transport of Evidence

When digital evidence is taken into custody, appropriate measures should be taken to assure that it is not damaged or destroyed, that it is properly labeled and kept together, and that it is not mixed up or otherwise tainted. If these precautions are not taken, the results can be effectively challenged.

A.Possession and Chain of Custody

It is common practice in some venues to videotape the evidence collection process, and this has been invaluable in meeting subsequent challenges in many cases. In one example, a challenge was made based on the presence of a floppy disk in a floppy disk drive; however, the videotape clearly showed that no floppy disk was present, and this defeated the assertion.

B.Packaging for Transport

Packaging for transport of digital forensic evidence has requirements similar to those of other evidence. The evidence should be transported in a timely fashion to a facility where it can be logged into an evidence locker. Chain-of-custody requirements must be met throughout the process, and the evidence has to be kept in an environment suitable for the preservation of its contents.

If a claim of evidence tampering is to be made, this will have to be shown to have taken place when it was in the custody of the person who is asserted to have taken this action. In one case we were able to show that the amount of time available to an individual accused of tampering was inadequate to have planted the evidence asserted to have been planted. Tampering is not an easy thing to do without detection. Because of all of the inherent redundancy associated with digital forensic evidence, as described earlier, tampering can often be detected by a detailed enough examination.

C.Due Care Takes Time

Based on the requirement for a speedy trial and high workloads in most forensic laboratories, time constraints are often placed on storage and analysis of evidence. A job done quickly normally translates into a job done less thoroughly than it might otherwise have been done. The more time spent, the more detailed an examination can be made and the more of the overall mosaic will be pieced together. In practice, most cases are made with a minimum of time and effort on such evidence, and this opens the opportunity for errors and the resulting opportunity for challenges.

D.Good Practice

Transportation should be done with the following good practice elements. Handle everything with care; keep it away from magnetic sources such as loudspeakers, heated seats, and radios; place boards and disks in antistatic bags; transport monitors face down buckled into seats; place organizers and palmtops in envelopes; and place keyboards, leads, mouse, and modems in aerated bags.

VI.Storage of Evidence

Evidence must be stored in a safe, secure environment to assure that it is safe from alteration. Access must be controlled and logged in most cases. But this is not enough for most digital evidence. Special precautions are needed to protect this evidence, just as special precautions are needed for some sorts of biological and chemical evidence.

A.Decay with Time

All media decays with time. Decay of media produces errors. Typically, tapes, CDs, and disks last 1 to 3 years if kept well but can fail in minutes from excessive heat (e.g., in a car on a sunny day, on a radiator, or in a fire). Electromagnetic effects can cause damage in seconds, as can high impulses or overwriting of content. Non-acid paper can last for hundreds of years but can also fail in minutes from excessive heat or in seconds from shredding or being eaten. Audit trails also tend to decay with time. Some are never stored, whereas others last minutes, hours, days, weeks, months, or years.

B.Evidence of Integrity

Evidence of integrity is normally used to assert that digital forensic evidence is what it should be. This is generally assured by using a combination of notes taken while the data was extracted; using a well-understood and well-tested process of collection; being able to reproduce results, which is a scientific validity requirement in any case; using chain-of-custody records and procedures; and applying proper imaging techniques associated with the specific media under examination.

The establishment of purity of evidence is generally better if established earlier in the process. The media being imaged or analyzed should be write-protected so that accidental overwrite cannot happen. A cryptographic checksum should be taken as soon as feasible to allow content to be verified as free from alteration at a later date. It may also be wise to do cryptographic checksums on a block-by-block and file-by-file basis to assure that even if part of the evidence becomes corrupt or loses integrity with time, the specific evidence is covered by additional codes. This allows us to assure the freedom from alteration of portions of a large media even if the overall media becomes corrupt. Keeping the original pure by only using it to generate an initial image and working only from images from then on is a wise move when feasible. Validating purity over time also helps to assure that time is not wasted and that no alteration occurs in the analytical process.
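The value of block-by-block checksums can be illustrated with a minimal sketch. The choice of SHA-256, the 4096-byte block size, and the function names `hash_blocks` and `changed_blocks` are assumptions made for illustration only; the in-memory byte string stands in for a real image file.

```python
import hashlib


def hash_blocks(data: bytes, block_size: int = 4096) -> list[str]:
    """Return one SHA-256 hex digest per fixed-size block of the image."""
    return [
        hashlib.sha256(data[i:i + block_size]).hexdigest()
        for i in range(0, len(data), block_size)
    ]


def changed_blocks(original: list[str], current: list[str]) -> list[int]:
    """Return the indexes of blocks whose digests differ or are missing."""
    shared = min(len(original), len(current))
    mismatches = [i for i in range(shared) if original[i] != current[i]]
    # Blocks present in only one of the two lists also count as changed.
    mismatches.extend(range(shared, max(len(original), len(current))))
    return mismatches


# A stand-in for an evidence image: 16384 bytes, i.e. four 4096-byte blocks.
image = bytes(range(256)) * 64
whole_digest = hashlib.sha256(image).hexdigest()
block_digests = hash_blocks(image)

# Simulate decay of a single byte inside the third block (index 2).
damaged = bytearray(image)
damaged[2 * 4096 + 10] ^= 0xFF
corrupted = bytes(damaged)

print(hashlib.sha256(corrupted).hexdigest() == whole_digest)  # False
print(changed_blocks(block_digests, hash_blocks(corrupted)))  # [2]
```

The whole-image checksum only reports that something changed, whereas the per-block digests show that blocks 0, 1, and 3 still verify, so evidence drawn from those blocks remains covered.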

Nobody knows for certain that any evidence is completely pure and free from alteration, and likely nobody ever will. But this does not mean that all evidence can be successfully challenged or should be. Just because people are not perfect, that doesn’t mean they are not good enough. Just because evidence is not perfect, that doesn’t mean it is not good enough.

C.Principles of Best Practices

Principle 1: No action should change data held on a computer or other media.

Principle 2: In exceptional circumstances where examination of original evidence is required, the examiner must be competent to examine it and explain its relevance and implications.

Principle 3: Audit records or other records of all processes applied to digital evidence should be created and preserved. An independent third party should be able to reproduce those actions with similar results.

Principle 4: Some individual person should be responsible for adhering to these principles.

VII. Evidence Analysis

Evidence analysis is perhaps the most complex and error-prone aspect of digital evidence. It is also the most subjectively applied in many cases. But in almost all cases it should not and need not be subjective. It is subjective largely because of the failure of those undertaking analysis to spend the time and effort to be careful in what they do.

A.Content

Making content typically involves processing errors. For example, an unclean palate is used in the analysis process, and the analysis finds evidence that was left over from a previous case. This was addressed under imaging, discussed earlier. The challenge to this can come in many forms, and if original evidence or cryptographic checksums are not used, such challenges have a good chance of success because of the inability to independently verify results. If originals are present and checksums can be shown to match, then such challenges will only succeed in the presence of actual and material error because the validity of the evidence can be properly established.

Missing content typically results from limited time or excessive focus of attention. Limited time is almost always an issue because there is usually an enormous amount of evidence present, most of which can only be peripherally examined with simple tools. Examining every bit pattern from every possible perspective is simply too time-consuming to be feasible and is almost never necessary to get to the heart of the evidence. Excessive focus, on the other hand, is easier to avoid. By simply taking an open view of what could be meaningful evidence and being thorough in the evaluation process, such misses are avoidable. The challenge is simple. Did you look at everything? Is there any exculpatory evidence? Where did you look? Why did you not look in the other places? What technique did you use? Why did you not use a more definitive technique? Is there a more definitive technique? The questions can be nearly endless.

B.Contextual Information

Information has meaning only in context. Analysis can make context by making assumptions that are invalid or cannot be demonstrated. Context is missed when assumptions that are valid and can be demonstrated are not made. The challenge to context that has been made starts with questioning the basis for assumptions. If assumptions cannot be adequately demonstrated, the context becomes dubious, the assumptions fall away, and the conclusions are not demonstrable. If an alternative context can be demonstrated with the same or better basis, that context can be substituted and the interpretation of the evidence altered. Missed context can be challenged with the introduction of alternative contexts. It then becomes the challenge of the other side to disprove these contexts.

An excellent example of this was a case in England where the defense challenged the validity of the evidence by introducing the potential that an attack on the computer system being examined caused the illicit effects rather than the user at the console. In the end, the prosecution could not convincingly demonstrate the validity of its assumption that the user who owned the computer carried out the behavior in question, and the case was dismissed. Although many computer security experts assert that there was no evidence of the presence of such an attack, the lack of a demonstration of its presence by the defense is not the same as the demonstration of its absence by the prosecution.

C.Meaning

The meaning of things that are found is obviously the basis for interpretation. Meaning that is missed leads to a failure to interpret, and meaning that is made is an interpretation without adequate support.

For example, the presence of a file with a name associated with a particular program might indicate that the program was present at some time in the past, but not necessarily. The filename could be a coincidence or it could have been placed there by other means, such as part of a backup or restoration process. In most cases there are a variety of different meanings that can be applied to content, and determining the most likely meaning typically involves reviewing the different possible meanings relative to the rest of the environment. The same applies to the context, presence or absence of files, directories, packets, or any other things found in material under examination.

D.Process Elements

Content does not come to exist through magic. It comes to exist through a process. The notion that a sequence of bits appears on a system without the notion of how that sequence came to exist there makes for a very weak case. If the bits were created within the system, the means for their creation should be there unless it was somehow removed. If the bits were obtained from somewhere else, the process by which they got there should be identified. If there are alternative explanations for the arrival of the bit sequence, why is one interpretation better than the other?

Processes normally generate audit records of some sort somewhere. Files have times associated with them in most file systems. If a file was retrieved from a network, audit records from the location it came from and the connection to the network may be recorded. If some image was deleted and the residual information from it is gone, there must be some process by which that event sequence occurred. Without evidence of the process, alternative explanations may be offered with as much credibility as the explanation preferred by one side. The plausibility of these explanations is key to the meaning of the content they refer to.

E.Relationships

Just as sequences of events produce content, relationships between event sequences and content produce content. The presence or absence of related content causes differences in the content generated by related processes. The presence or absence of a directory prior to running a program that uses or creates it produces a difference in the time associated with the creation of the directory. Similarly, the placement of the directory in the linked lists associated with the file system relative to the placement of files within that directory may indicate the differences in these relationships. There are many such relationships within systems, and those relationships can be explored to challenge the assertions of those who make claims about them.

F.Ordering or Timing

Sequences are a special case of orderings. More generally, orderings can involve things that cannot be differentiated from being simultaneous, whereas sequences are completely ordered. Timing often cannot be established with perfection, but partial orderings can be derived. The possible orders of events can make an enormous difference in some cases. One obvious reason for this is that ordering is a precondition for cause and effect. To assert that one thing caused another, it must be demonstrable that the cause preceded the effect. If this cannot be established by timing, there is the potential to challenge based on the lack of a causal basis.
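A partial ordering of this kind can be sketched by giving each event a window of possible times rather than a single timestamp. The interval model, the `Event` class, and the example values are assumptions chosen for illustration, not a method taken from any particular case.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Event:
    name: str
    earliest: float  # earliest possible time, in seconds on a common clock
    latest: float    # latest possible time, in seconds on a common clock


def definitely_before(a: Event, b: Event) -> bool:
    """True only if every possible time for a precedes every possible time for b."""
    return a.latest < b.earliest


def relation(a: Event, b: Event) -> str:
    """Describe the ordering that the time windows actually support."""
    if definitely_before(a, b):
        return f"{a.name} precedes {b.name}"
    if definitely_before(b, a):
        return f"{b.name} precedes {a.name}"
    return f"{a.name} and {b.name} cannot be ordered"


# Hypothetical events with uncertain times.
transfer = Event("transfer", 100.0, 105.0)
disclosure = Event("disclosure", 103.0, 110.0)
erasure = Event("erasure", 120.0, 121.0)

print(relation(transfer, erasure))     # transfer precedes erasure
print(relation(transfer, disclosure))  # transfer and disclosure cannot be ordered
```

When two windows overlap, as with the transfer and the disclosure here, the timing alone cannot support a claim that one caused the other.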

Although this may seem like a highly theoretical argument, many cases have been made or broken by the ability to show time sequences. If an accusation of theft and disclosure of trade secret information is made and it cannot be shown that the theft preceded the disclosure, then the basis for the claim is invalid. If an attacker was supposed to be present at a computer to commit a crime at a particular time and they can show that at that time they were not present at that computer, then the alibi will refute the claim. If the time is uncertain in a computer system, the entire process becomes suspect because computers usually keep time very well. If ordering or timing is missed, the lack of the ordering or timing information leads to challenges. If timing assumptions are made and not validated, they can be questioned.

The most common challenge to computer-related times stems from the potential difference between a computer clock and the real-world time. Even accounting for time zone variations, this is an all too common problem that has to be addressed in the forensic process. If the time reference for the computer is not established at the time the evidence is collected, timing can sometimes be obtained by relating the timing of events within the computer to externally timed network events. Missed time can sometimes be made up for by correlation with outside events, whereas made time can often be demonstrated wrong by similar correlation. The lack of correlating information represents sloppiness in the collection and analysis process that may itself lead to the inability to determine timing.
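Correlation with externally timed events can be sketched as follows. The example assumes that the same events appear both in the computer's own records and in an external, trusted log; the median-of-differences estimate, the function names, and the sample times are all illustrative assumptions.

```python
from datetime import datetime, timedelta
from statistics import median


def estimate_offset(pairs: list[tuple[datetime, datetime]]) -> timedelta:
    """Estimate (computer clock - reference clock) from matched event pairs."""
    diffs = [(local - reference).total_seconds() for local, reference in pairs]
    return timedelta(seconds=median(diffs))


def to_reference_time(local: datetime, offset: timedelta) -> datetime:
    """Convert a time recorded by the computer to the reference time base."""
    return local - offset


# Hypothetical matched events: (time in the computer's log, time in an external log).
pairs = [
    (datetime(2004, 3, 1, 10, 5, 2), datetime(2004, 3, 1, 10, 0, 0)),
    (datetime(2004, 3, 1, 11, 5, 3), datetime(2004, 3, 1, 11, 0, 0)),
    (datetime(2004, 3, 1, 12, 5, 4), datetime(2004, 3, 1, 12, 0, 0)),
]

offset = estimate_offset(pairs)
print(offset)  # 0:05:03

# A file time recorded by the same computer, corrected to the reference clock.
file_time = datetime(2004, 3, 1, 14, 10, 3)
print(to_reference_time(file_time, offset))  # 2004-03-01 14:05:00
```

The spread among the individual differences (here 302 to 304 seconds) gives a rough sense of drift and should be reported alongside any corrected time.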

A less used challenge stems from the ability to determine ordering of events in the absence of other timing information. For example, there are cases when times and exact sequences could not be determined but orderings could because of overwrite patterns on disks. In one such case it was shown that a file transfer happened before an erasure was done, because the area of the disk where the file would have existed had the erasure happened first was covered with the pattern associated with the erasure. What happened between these events and the precise times they occurred could not be determined, but the ordering could be, and it was one of the pieces in a larger puzzle that determined the outcome of the case.

G.Location

Everything that happens in computers has physicality despite any efforts to portray it as somehow ephemeral. Physicality tends to leave forensic evidence in one form or another. For example, when a person uses a keyboard, particles from hair and skin fall into the keyboard and tend to get stuck there. In a similar fashion, data in computers tends to be placed on the disk and tends to get stuck there. Computer systems have physical characteristics as well, and sometimes they are dead giveaways to location.

In one example of a missed and made location, an attack against a government computer system had an Internet protocol (IP) address associated with a location in Russia. But when observing the traffic patterns shown in log files, it was determined that the jitter associated with packet arrival times was very small. Packet arrival-time jitter tends to occur when packets are mixed in delivery queues across infrastructure. More infrastructure tends to lead to more jitter. The lack of jitter meant that the arriving packets were not being mixed much with other packets and that the computer responsible was, therefore, physically close to the observation point. The attack was traced to a point only a few network links away from the surveillance point. Evidence such as this can make a compelling case, and it’s easy to miss the real location and make a false location when such analysis is not thoroughly undertaken.
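One simple way to quantify jitter from logged arrival times is sketched below. The text does not say how jitter was measured in this case; using the standard deviation of the gaps between successive packets is an assumption, and it is only meaningful if the sender emitted packets at roughly regular intervals. The arrival times are invented for illustration.

```python
from statistics import pstdev


def interarrival_jitter(arrival_times_ms: list[int]) -> float:
    """Standard deviation of the gaps between successive packet arrivals."""
    gaps = [later - earlier
            for earlier, later in zip(arrival_times_ms, arrival_times_ms[1:])]
    return pstdev(gaps)


# Hypothetical arrival times in milliseconds for packets sent every 10 ms.
nearby_source = [0, 10, 20, 30, 40]   # little queuing along the path
distant_source = [0, 4, 27, 31, 52]   # gaps disturbed by queuing delays

print(interarrival_jitter(nearby_source))  # 0.0
print(interarrival_jitter(distant_source) > interarrival_jitter(nearby_source))  # True
```

Low jitter by itself does not prove proximity. It is one indicator that has to be weighed against the rest of the evidence, such as the claimed source address.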

H.Inadequate Expertise

We also face many low-quality experts and people with an axe to grind who are put up as experts. An excellent example of this was a case involving copyright infringement in which a court-appointed special master made claims including (1) the accuser may have altered data, (2) date and time information was unreliable, (3) the system never worked when returned to its owner after it was forensically investigated, (4) programs were destroyed, and (5) preexisting data was no longer present. In this case, all of these claims were refuted by a better expert who used recorded statements, a videotape of the return process, some details of the physics of writing to disks that dismissed the possibility of forgery, and correlation with other records. It is important to note that, under some circumstances, all of the things asserted by this special master could have been true, so the claims were not outrageous in the general sense. It is only that they were not in fact true in this case.

I.Unreliable Sources

There are a lot of unreliable sources of digital content. For example, the Internet is full of the widest possible range of different content, only a small portion of which is really accurate and a significant portion of which is just plain false. There is a tendency for people to believe some of these things, and once these things are believed, the belief transcends the original source. For example, when looking up information about the function of a hardware device or software program, at a detailed level, much of the information on the Internet is not accurate. It might reflect a different version; it might reflect a mistake by the author; it might reflect a simplification by the author for reader convenience; and it might be an intentional or malicious misstatement by a disgruntled ex-employee. Although this information may be convenient or readily available and useful in many circumstances, it is not generally suited to the level of trust required for digital forensic evidence purposes.

As a good example, the question sometimes comes up of the list of all circumstances under which a file access date and time will be altered by a Windows operating system under normal use. It turns out that this is not an easy question to answer. The answer varies on different versions of Windows, different applications may use different system calls for the same outcome with different side effects, and programs that deal with forensic processes typically do things differently than other operating system programs. Even such simple questions do not have easy answers, and the Internet answers are not consistent or accurate in many cases.

J.Simulated Reconstruction

In some recent court cases, computer-based reconstructions of physical events have been used in presentations to juries. In some sense this is no different than the use of storyboards to show how a crime is purported to have happened, but in another sense it can be too realistic in that it can give the appearance of certainty about many things that there is no certainty about. In such a case there are a number of approaches to reducing the impact of such fabricated evidence.

One of the keys to countering this sort of evidence is to use the terms _fabricated_, _fabrication_, and _fabricating_. When referring to this evidence, it should always be identified as a fabrication. Underlying this question is the issue of the prejudicial value as opposed to the probative value. The question is one of differentiating the part of the fabrication that is more probative than prejudicial from the part that is not. Is the use of continuous video more probative or prejudicial than a set of storyboards? The enhancement generated by motion is, in almost all cases, more prejudicial than probative because the intervening fabrication of motion is highly specific while the real knowledge of the details is almost always very limited. Did the perpetrator use their right or left hand? Did they really bend their elbow like that? Did they trip over an obstruction on the floor? Did their shirt really wrinkle like that?

If the information provided by the fabrication is not demonstrably accurate, it is not relevant and is provided without a proper foundation. If the coloring of the face is similar to that of a defendant, this is prejudicial and, unless there is evidence as to the coloring of the face of the perpetrator, green might be a better choice. It is valid to zoom in on the parts of the presentation and ask whether the information at that detailed level is accurate. If the depicted gun is a different sort than the one used in the crime, the gun type should be shown to the finder of fact, and the question should be asked about whether this is evidence that the defendant did not do this crime. The answer will be no, and this gives the opportunity to again point out the fabricated nature of the display and its gross inaccuracy as to the facts. What in fact is real about this fabrication? Can you tell us whether the colors in this fabrication are real? How about the shadows? Is the time on the fabricated clock real? Are the footfalls real? Does this fabrication show any trace evidence being left on the site? What evidence do you have of the height of the person in this fabrication?

K.Reconstructing Elements of Digital Crime Scenes

Digital crime scenes can also be reconstructed, and this is a critical area for scientific evidence. Although experts may assert any number of things about what might be within a computer, the ultimate test can often be made through a reconstruction. But even reconstruction of a digital crime scene has its limits. Although similar circumstances can be created, identical ones often cannot. As a rule of thumb, simple questions can often be answered by digital reconstructions, but complex sequences of events are far harder to confirm or refute.

A simple example where reconstruction is very effective is determining whether or not a file is created by an application in the normal course of operation. To test this, it is a simple matter to install the application on a system, operate it normally, and see whether this file is created. It is far harder to make this determination through reconstruction in abnormal operation. An excellent case example of a reconstruction that refuted evidence was a case in which the prosecution asserted that a particular program could be used to extract deleted file content from a floppy disk. The prosecution knew that a utility program by a particular name was present and that this program was commonly used for this sort of operation. The defense did a simple reconstruction. They took the actual program on the defendant’s system and tried to do what the prosecution claimed could be done. This failed. It turned out that the particular version of this utility program on the defendant’s computer did not have the capability the prosecution claimed it had. Earlier and later versions had this capability, but not the one on the defendant’s system. Case dismissed.

Digital reconstruction can be a powerful tool, but it cuts both ways. There are plenty of cases in which reconstruction confirms rather than refutes the evidence. Indeed, this is one of the great values of doing reconstruction. It tends to get at the truth. The problems with such reconstructions come when they are interpreted too generally or when they are used to try to make claims about complex situations. A good example of the limitations of such reconstructions is any case where many possible sequences of events could have taken place, and these events involve complex interactions between components. The larger the number of possible sequences, the more reconstruction runs are necessary to exhaust the space of possibilities. In cases where exhaustion is not feasible, statistical samples can be taken, but the nature of digital systems makes such statistics highly questionable. The more intertwined the elements are, the more complex the potential sequences become.
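The growth in the number of possible sequences can be made concrete with a small calculation. The sketch assumes a simplified model in which each component produces its own events in a fixed order, while events from different components may interleave freely; real systems impose further constraints, so the figures are only indicative. The function name `interleavings` is an illustrative choice.

```python
from math import factorial, prod


def interleavings(lengths: list[int]) -> int:
    """Count the orderings that preserve each component's internal event order.

    This is the multinomial coefficient (n1 + n2 + ...)! / (n1! * n2! * ...).
    """
    return factorial(sum(lengths)) // prod(factorial(n) for n in lengths)


print(interleavings([3, 3]))     # 20
print(interleavings([5, 5, 5]))  # 756756
```

Two components with three events each already allow 20 distinct orderings, and three components with five events each allow more than 750,000, which is why exhaustive reconstruction quickly becomes infeasible.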

In a simple case where the ability of a program to perform a function through the normal user interface is in question, reconstruction is simple and effective. Simply install the specific software on the specific system in question and try to use the interface to generate the desired results. In some cases complex sequences are required in order to generate specific outputs, but manufacturers and manuals are usually adequate sources for learning how to generate things that are meant to be generated.

If the goal is to prove that a program could have generated an output, it may be far more complex, depending on the specifics. Whereas some outputs are generated often or predictably, other outputs may be very situation-specific and may involve complex interactions with the environment. Creating the entire environment may be problematic if it involves such things as network events that are usually out of the control of those doing reconstruction. There are forensic technologies that can largely accomplish these sorts of reconstructions, but they are rarely used and difficult to implement properly.

If the goal is to show that it was impossible for a perpetrator to have entered a computer and performed a function without leaving any evidence, the task may be very difficult. More generally, proving an assertion about something with unlimited numbers of possibilities or disproving something under the assumption of the perfect opponent is very hard and sometimes impossible. But in almost all such cases a demonstration can be constructed to show some subset of the scenarios. If this is done, the challenge can be made on the basis that the space was inadequately covered.

L.Good Practice in Analysis

It turns out that nobody has yet compiled a widely accepted collection of good practices for analysis of digital evidence. In fact, parts of this book and the references provided may be considered as close to such a compilation as you are likely to find.

As a result, all analysis is subject to expert interpretation and challenges of all sorts, and each case will be judged on its merits without appeal to some standard, regardless of how tentative it is. But there are some time-tested analysis techniques that should be covered despite the lack of any widely published good practices.

_1._The Process of Elimination

It is generally considered good practice to use the process of elimination. In this process, a list of the possibilities is made and items on the list are eliminated one at a time or in groups for specific reasons that can be backed up by facts. The challenges to the process of elimination start with the initial list. Is the list comprehensive? How do you know it is comprehensive? If I find one thing missing, does this invalidate the list as being comprehensive? What if I find two? Are there implicit assumptions in the list? What are they? Are they demonstrably true? What if they are wrong? The next challenge comes in the application of the process. Was the test of each item on the list definitive? Was it properly done? What are the cases in which this test would fail to be revealing? Does a positive or negative result in your test environment represent the same result as what would happen in the real environment? In other words, what are the possible differences between the real world and the test environment? And finally, almost all such tests make assumptions about the independence of the items on the list or the elements within the computer systems involved. Suppose these things are not independent, would that invalidate this test? In many cases these assumptions can be demonstrated to be incorrect under certain circumstances.
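One way to keep such a list open to the questions above is to record, for every possibility, why it was eliminated and which facts back that up. The sketch below is a hypothetical bookkeeping structure, not an established forensic tool; the class, function names, and sample entries are all invented for illustration.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class Candidate:
    description: str
    eliminated: bool = False
    reason: Optional[str] = None  # why the possibility was ruled out
    supporting_facts: List[str] = field(default_factory=list)


def eliminate(candidate, reason, facts):
    """Mark a possibility as ruled out, recording the reason and its facts."""
    candidate.eliminated = True
    candidate.reason = reason
    candidate.supporting_facts = list(facts)


def remaining(candidates):
    """Possibilities that have not been ruled out."""
    return [c.description for c in candidates if not c.eliminated]


def unsupported_eliminations(candidates):
    """Eliminations with no recorded facts: the easiest ones to challenge."""
    return [c.description for c in candidates
            if c.eliminated and not c.supporting_facts]


if __name__ == "__main__":
    # Hypothetical list of explanations for how a file came to be on a disk.
    items = [Candidate("created by local user"),
             Candidate("placed by malware"),
             Candidate("copied from removable media")]
    eliminate(items[1], "no malware found", ["scan report (hypothetical)"])
    eliminate(items[2], "examiner judgment", [])
    print(remaining(items))
    print(unsupported_eliminations(items))
```

A record like this does not show that the initial list is comprehensive or that the items are independent; it only makes visible which eliminations rest on documented facts.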

_2._The Scientific Method

The basis of the scientific method is that the truth can be verified by the failure of experiments that attempt to disprove an assertion. But even one refutation implies that the hypothesis under test or the testing method was incorrect in some respect.

_3._The Daubert Guidelines

Case law in the United States has led to the Daubert Guidelines for the admissibility and validity of scientific evidence. These almost always apply to digital forensic analysis issues. The tests of scientific evidence in this case include four basic issues: (1) Has the procedure or technique been published? (2) Is the procedure generally accepted? (3) Can and has the procedure been tested? (4) What is the error rate of the procedure?

Most digital forensic analysis methods in use today have not been widely published. Those that are published are rarely published in refereed scientific journals. There are several books on this subject and more such books are being written. In addition to these publications, there are manuals from products and books on special purpose topics. Finally, hardware and software design and source code information is often available to those properly trained to understand it. These then form the literature in this area. The lack of published material leads to many challenges to the admissibility or validity of this evidence. Perhaps most importantly, most of the forensic examination and analysis tools that are made for sale include trade secrets and unpublished content that form the intellectual property basis that creates competitive advantages and barriers to entry in the market. As a result, the most commonly used tools do not include the information required to determine what precisely they do. Their use and their results can be strongly challenged on this basis. Similarly, file formats, hardware device operations, and similar functions of components are not known at the most detailed level, and their operation is not published.

In terms of being generally accepted, there are few generally accepted analysis techniques for digital forensic evidence. In some sense, without publication, general acceptance is impossible, but on a more general basis, almost any presentation of analysis of digital forensic evidence is challengeable on this basis. For example, if a forensic analyst asserts that a file contains some specific data and was created at some particular date and time, in addition to the technical limitations of this assertion, the methods used to derive this information can be questioned in great detail to try to shake confidence in the validity of the technique undertaken. The problem with such a challenge is that it risks alienating the finder of fact and is likely to come down to things that users do every day but that are not documented as forensic processes. This approach is more applicable in cases where the technique is believed to have produced wrong results. In such cases, wrong results are usually easily demonstrated by following the specific steps taken by the examiner.

Public testing of analysis techniques has not been done to date. Although the United States NIST is undertaking tests of forensic imaging processes, analysis techniques are essentially only tested today by the individuals doing work in this area. The tests undertaken can usually be described by the forensic examiner, but they are likely to be very limited. It is reasonable in most cases to challenge the tests done to validate the technique used by the examiner, but the import of this depends heavily on the evidence being presented. If the person presenting the evidence has not tested their own tools, they will probably be hard-pressed to show that the tools have been tested elsewhere, and the credibility of the technique and the person applying it can be challenged.

The error rate of forensic analysis tools is even harder to attest to, because in almost all commercial products no error rate can be established without independent tests. As a result, although error rates for such things as cryptographic checksums on forensic images can be estimated, error rates on disk searches and depictions of images based on file content are far harder to ascertain. The problem with this approach to challenging evidence is that the underlying digital technology is very good at nearly error-free operation. Although there are almost always errors in the programs implementing any digital forensic process, these errors are not apparent, and similar processes can be undertaken with independent software to mitigate these challenges. Again, challenges here should be made only in cases where there is a good reason to believe that the resulting facts are in error.
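As an illustration of the checksum comparison mentioned above, the following minimal sketch computes a SHA-256 digest of two files and compares them. The choice of SHA-256 and the function names are assumptions made for this example; the text does not prescribe a particular algorithm or tool.

```python
import hashlib


def sha256_of(path, chunk_size=1 << 20):
    """Return the SHA-256 hex digest of a file, read in fixed-size chunks."""
    digest = hashlib.sha256()
    with open(path, "rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def images_match(path_a, path_b):
    """True if the two files have identical SHA-256 digests."""
    return sha256_of(path_a) == sha256_of(path_b)
```

Running the same comparison with an independently written hashing utility and obtaining the same digest is one simple form of the independent cross-check the paragraph describes.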

_4._Digital Data Is Only a Part of the Overall Picture

Almost every analysis of digital forensic evidence ultimately involves ties to the real world. In order for analysis to produce meaningful results, it must tie those results to the matter at hand. The analysis process as a technical matter can often be resolved, and some set of resulting bit sequences with some time sequencing can be revealed to the finder of fact without significant disagreement. In fact, this is often done by stipulation, subject to an adequate presentation. However, the interpretation of those results is subject to far more variation than the setting of the bits.

The attribution of actions to actors is often hard to pin down. Although there are cases in which there are films of people using their computers and typing the material of interest to a legal matter, this is certainly the exception rather than the rule. Attribution has been and continues to be studied, and there are many indications that behavioral and biometric indicators can be used to attribute actions to actors. However, the amount of scientific study in this area to date is limited, and the results are far from definitive. Furthermore, the characteristics used in attribution are usually tied to time sequences and interaction sequences. For example, different keyboards produce different error rates and error types for typists with different training and experience, but if all we have is a spell-corrected end document with no data-entry sequences, these errors will not appear in the analysis. This is often a basis for challenge, and in some cases it is highly successful.

Physical evidence can often be tied to digital evidence. For example, an online credit card theft may be challenged, but if the credit cards stolen resulted in purchases delivered to the defendant’s address, and the defendant did not question these items, try to return them, and is using them, the computer evidence of the theft may be hard to discredit. On the other hand, the lack of a nexus with real-world events should lead to a serious question about validity. Just because someone wrote about a credit card fraud scheme does not mean they perpetrated one. Even if their computer was involved in one that is similar to their writings, the lack of a physical nexus is a potentially fatal flaw.

Means, motive, and opportunity apply to the digital world as well as the analog one. If the evidence shows a level of expertise in developing and hatching a scheme, and the individual on trial does not have the necessary expertise, the means does not exist. Digital systems are complex, and a great deal about the knowledge of individuals is often revealed by the audit records and software present on the system under examination. Opportunity in computers does not always require presence. Because of the potential for telepresence in a networked computer, an analysis of events in a computer does not always tie the individual to the events or the events to the presence of any individual. Making or missing these ties is commonplace.

_5._Just Because a Computer Says So Doesn’t Make It So

This is perhaps my favorite basis for challenging the analysis of digital forensic evidence. The seeming infallibility of computers leads many to believe that, if a computer says so, it must be so. People are increasingly realizing that this is not true, but the point still must be made in many cases. The sources of errors in computers are wide-ranging. From computer viruses that leave pornographic content in computers, to remote control via Trojan horses that allow external users to take over a computer from over the Internet, to just plain lies typed in by human beings, computers are full of wrong information. In some studies of data-entry errors, rates on the order of 10% to 20% are common. That is, 1-in-10 to 1-in-5 data entries in a typical commercial database are not complete, accurate, and reflective of reality.

VIII. Overall Summary

The number of ways that digital forensic evidence can be challenged is staggering, and many of these challenges can be successful in the proper circumstances. But a competent digital forensics process and examiner can avoid all of these challenges by diligent efforts and thorough consideration of the issues.

Avoiding all faults is impossible, but almost all failures can be avoided by prudent efforts. When faults occur, they may or may not produce failures. And some failures are recoverable, whereas others are not. At steps in the process where faults lead to failures that are not recoverable, special care should be taken to avoid these faults.

Limits of budget, training, tools, and simple human errors have many effects on the challenges to digital forensic evidence, and those who wish to use this evidence should take note of the need for overcoming these limits within their organizations.

Those who seek to challenge such evidence have an equally daunting task because of the widespread perception of computers as perfect, which leads to excessive belief in the content within them. But as more and more people have their identities stolen, suffer financial problems created by computerized records, miss flights because of computer failures, and receive fraudulent spam e-mails, this will change. Planting the seeds of distrust in computers and computer evidence is the basis of any challenge, and these seeds exist for those who seek to find them.

When those engaged in the forensic process miss or make, through accident or intent, it is the job of those who see these faults to point them out and act to correct any failures that may result. A healthy forensic process seeks to poke holes in its own system in order to improve it and seeks ways to compensate for holes it identifies. Hopefully these challenges will be met by those in the digital forensics community so that these challenges never have to rear their ugly heads again.

Strategic Aspects in International Forensics 8

DARIO FORTE, CFE, CISM

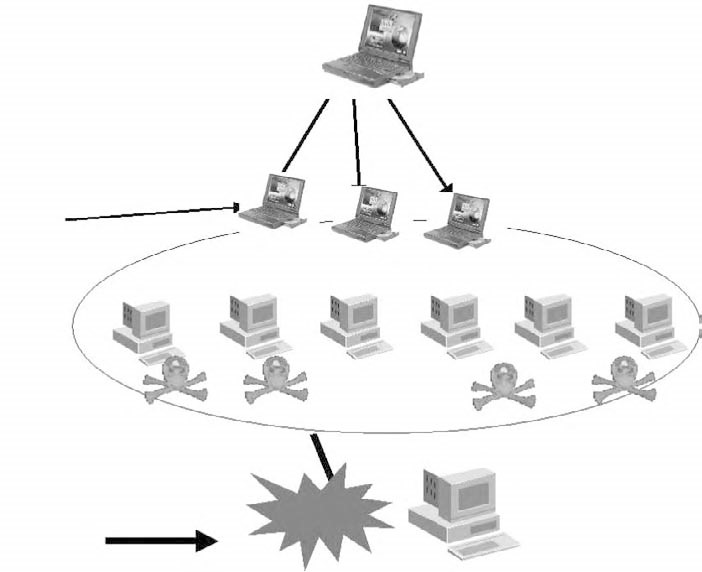

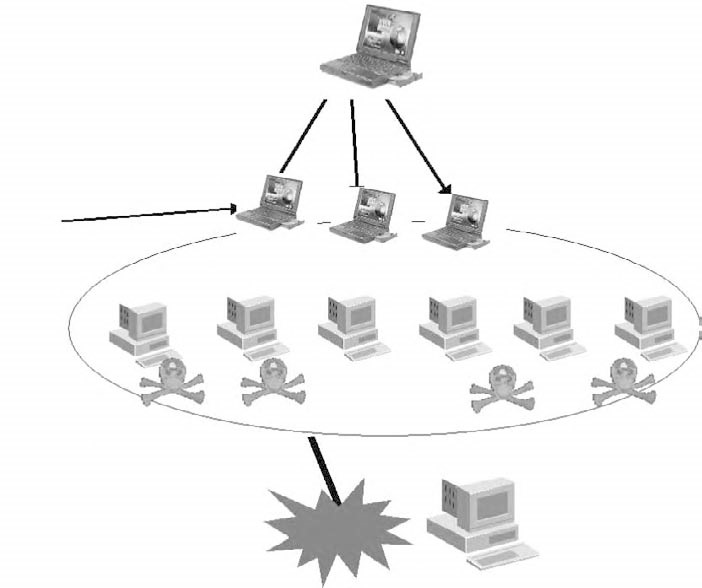

I.The Current Problem of Coordinated Attacks